Task Force Feature: Age of the Electron Part II

The God of Efficiency is dead, and electrification has killed it.

Greetings to the DER Task Force! We are back with another featured post this week, this time from James McGinniss. We are re-upping his piece on how DERs enable resiliency over efficiency and invert the paradigm underlying today’s grid. You can check out the piece and his other excellent writing at his Substack. We hope you enjoy!

P.S. We are having our next NYC meetup in Brooklyn Wed 7/13. RSVP here if you plan to attend!

Transitioning from the Hydrocarbon Age to the Age of the Electron requires completely reframing how we build and interact with our energy systems. Burning fossil fuels for heat, electricity, and transportation (whether in a car, power plant, or boiler) is a fundamentally different process from using AC or DC power to drive motors, or using photovoltaics and wind turbines to generate electricity. As our tools change how they convert and consume energy, the electricity grid that feeds them will go through a paradigm shift as well.

This shift of electricity’s end uses toward more critical tasks like heating our houses and charging our cars means the resiliency of local grid infrastructure will become more important than how “efficiently” we build our bulk grid. Today, most power outages are minor inconveniences: you bust out candles and flashlights and realize that you may lose a few days of groceries in the fridge. But in the future, as mission-critical uses like heat, internet connectivity, and transport come to depend on electricity, losing power will become far more dangerous. The problem is that our grids today are designed in such a way that they will continue to fail, despite our best efforts to harden them.

This need for local power sources and resilience will lead to the proliferation of Distributed Energy Resources (DERs). DERs are a category of new technologies that includes electric vehicles, smart thermostats like Nest, battery storage, rooftop solar, backup generators, heat pumps, electric water heaters, and more. They are distinguished by being digitally enabled, small, modular, and owned by end consumers rather than utilities, and they can shift your power consumption to the times when power is most available and cheapest. Most importantly, some of them can store or generate power in your home, so if the grid goes down, your lights stay on. Considering all of their benefits in aggregate, it is easy to see how a DER-heavy grid will be better than the system we currently have.

For the last 80 years, the grid has been built with the operational uptime of wholesale markets and centralized power plants in mind, a property known as reliability. It was not built to account for individual nodes (like your house) staying powered, a property known as resilience. Efficient combustion-based generators had to be built large enough to reach economies of scale, which in turn required extensive transmission and distribution networks to carry their power. Those wires are vulnerable to being knocked out by storms (or even squirrels), leading to blackouts, and highly interconnected systems are vulnerable to cascading failures when supply runs short. That was the deal we made: the reliability and efficiency of bulk generation and transport over the resilience of local nodes in times of crisis. But in the Age of the Electron, inverting market design to value resilience over reliability will lead to a superior electricity grid.

Centralized grids will never not fail

Every time the grid fails, experts offer explanations for the outages, such as market design, poor regulation, lack of weatherization, and more. But these analyses continually miss the forest for the trees: they fail to recognize that the underlying system design is functionally the same across all of these markets. Our grids are highly centralized, and they will continue to fail regardless of our best efforts to plan for worst-case scenarios. There is no stopping these outages, and there are more to come.

Over a six-month period in 2020–21, we witnessed three distinct grid failures in three different regions and climates of the US (CA, NY, TX), across three different market structures (centrally planned, capacity, and energy, respectively), for three different reasons (wildfires, wind, and cold, respectively). In August 2020, California grid operators had to institute rolling blackouts because of a power supply shortage during a heat wave, and soon after had to shut off power to customers to reduce the risk of the distribution grid starting wildfires (two separate reasons for blackouts!). In NY in August 2020, Hurricane Isaias knocked down enough wires that some customers were left without power for 7–10 days (an event like this happens most years). In February 2021, a generation shortfall driven by a variety of factors, but mainly a natural gas shortage, left Texans without power for multiple days in frigid temperatures, causing untold damage and 246 deaths.

Whatever the cause of recent and future outages, their frequency is expected to increase as climate change brings more extreme weather events, intermittent solar and wind make firm generation supply harder to guarantee, and we continue to underinvest in an aging grid. Furthermore, centralized power grids in an electrified economy present an existential risk, so the danger these outages pose is only going to increase.

Centralized grids are becoming single points of failure for an electrified society

We take for granted that our current energy infrastructure (as opposed to our electricity infrastructure) for fossil-fuel-driven processes is, for the most part, remarkably distributed and resilient, because we can store and convert these fuels locally and cheaply. Boilers and cars are devices that turn potential energy (fuel) into work, or actions that are useful to us. To do this work, we store fuel in propane tanks in backyards, gas tanks in cars, and storage tanks underneath gas stations until it’s needed. This means we typically have days’ or weeks’ worth of fuel to heat our houses if it’s cold when the power goes out (although most boilers need power to run). It’s also easy to take a jerry can to a nearby gas station and lug fuel back for a diesel generator or car, so we’re never truly stranded when a car runs out of fuel. When was the last time we legitimately could not do these things because there was no fuel? The 1970s? That was one of the worst existential crises our real economy has faced since the Great Depression. Outages, on the other hand, are a regular occurrence on the electricity grid, and until now its nodes couldn’t function on their own the way cars and boilers can.

In the Age of the Electron, meanwhile, power is becoming increasingly central to our daily lives, so outages will become deeply problematic. On an electrified grid, if falling trees take out the power lines in your neighborhood, you won’t have heat and may not be able to drive anywhere. Your nearby EV charging station won’t have power either, so there’s no jerry can to save you. Local municipal resources like bus lines, snowplows, and the utility trucks that repair the lines may be hobbled too. You could go days, if not weeks, without heat or any way to get around, and supply chains may even be disrupted. You won’t be able to work, because your router doesn’t have power and you can’t charge your computer. Your best hope is that a nearby community center has a microgrid, or that you were smart enough to install a generator or battery in your home.

This is exactly what it looked like in Texas during Winter Storm Uri, primarily because Texans rely heavily on electricity for heat. Although the northern U.S. is better prepared for winter weather due to its reliance on gas heating, the kinds of challenges faced by Texas will soon happen everywhere on a fully electrified grid. What’s more, during Uri the natural gas system was actually the primary cause of power failures, as power plants didn’t have enough gas to run due to shortages and pipes freezing, so it’s not as if today’s fossil-fuel based system is perfect, either.

Regardless, as heating and transportation (and even industrial processes) shift from gas and liquid fuels to electricity, we will be more exposed than we are now, since power grids fail more frequently than gas systems. What matters is that all of these centralized systems are designed in such a way that it is impossible to build a perfect defense against every possible tail event. So these outages are just the beginning; we need a radically new way of building our energy systems, and particularly our power grids. Luckily, DERs offer us the potential to build a better energy future than the power and gas systems combined.

A decentralized electricity grid will be superior to all prior energy systems

The future grid will be built from the bottom up, driven by a new class of technologies, DERs, that are in many ways vastly superior to their predecessors. DERs such as rooftop solar, distributed wind (less popular today, though farming communities have used it in the past), and geothermal heat pumps represent the first time in history that we can cheaply generate energy independently, on a small scale, anywhere. All combustion-driven processes rely on a distribution network, such as natural gas pipelines or diesel and propane trucks, to reach consumers. So while we can store these fuels easily, we cannot produce them ourselves, as we can with DERs. This point is still greatly underappreciated.

To be as self-sufficient with fossil fuels as a solar panel makes you, an individual would need to buy a generator, build an oil derrick, and run a refinery on site. A solar panel is all three in one: it produces power whenever the sun is shining, and onsite appliances can use that power directly. The same is true of geothermal heat pumps, which provide a constant, independent source of heating or cooling. In addition, electric vehicles and stand-alone storage are becoming effective enough to store power onsite cheaply, as fuel tanks did for their fossil-fuel predecessors. The upshot is that a home with solar, storage, a geothermal (or stand-alone) heat pump, and an EV will soon actually be more resilient than a home with a natural gas boiler.

These new technologies have arrived just in time, as the twin problems of electrification and increasing outages require policymakers, grid operators, and power companies alike to rethink grid planning. That rethinking marks a sharp departure from the grid as it looks today.

DERs differ drastically from thermal plants, and thus the grid must look different too

Our grid was built in a centralized manner because of technological constraints that no longer exist. In the past, our power generation sources relied on combustion, so building ever-larger centralized power plants maximized thermodynamic efficiency at scale and thus yielded the cheapest power. This in turn required large transmission and distribution (T&D) networks to carry power from sparsely populated areas, generally where land for power plants was cheap, to dense areas like cities. This introduced the grid’s fundamental weak point, one that cannot be designed away: wires. We did what we could with the technology we had, and structured our markets accordingly. Edge users that needed constant uptime, like hospitals and data centers, had to pay for resilience themselves via onsite generators. Building onsite resilience was considered redundant to the bulk grid and was thus deemed “inefficient”.

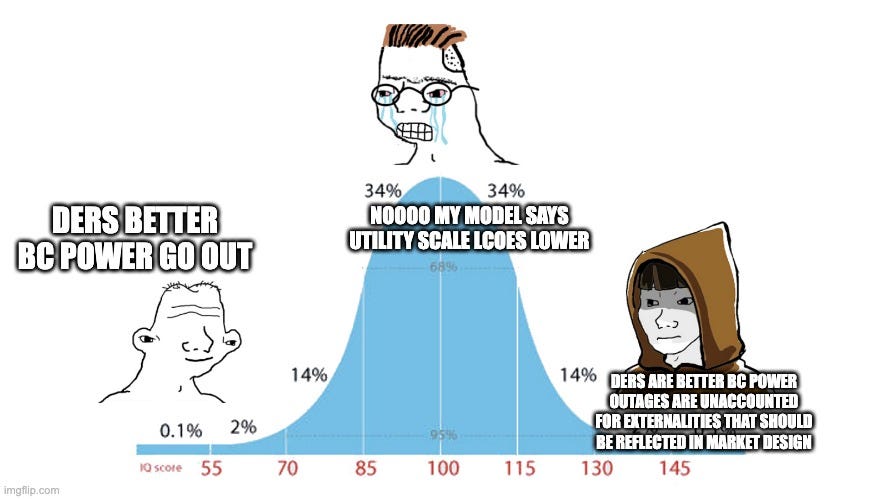

But DERs are changing the underlying techno-economics of the grid, and grid planning won’t be far behind. The primary difference is that, unlike their thermodynamic predecessors, solar and batteries have the potential to be cost-competitive with utility-scale installations on a per-unit basis. This is because the efficiency of solar panels and battery storage does not scale with size, so we don’t need to chase the economies of scale we used to. While a utility-scale solar farm in, say, West Texas currently produces power more cheaply on a $/kWh basis than rooftop panels like those pictured, this does not have to be true. For example, in Australia rooftop solar costs $1,219/kW and utility-scale solar $1,061/kW, whereas in the US the figures are $3,520/kW ($4,236/kW in CA) and $1,101/kW, respectively, due to high costs from slow bureaucratic processes like permitting. Judged purely as wholesale power plants (and assuming the US could slash its red tape), utility-scale solar would have a slight edge over rooftop solar. But because rooftop power doesn’t need to travel far, rooftop solar can end up earning a higher return than utility-scale solar when it is compensated for reducing the need for extensive poles and wires. And that is before factoring in the value of resilience to end users. Because these benefits are underappreciated, modular rooftop solar looks like a worse-returning asset for grids than large-scale renewables, when in fact the opposite may be true.
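To make the arithmetic in that comparison explicit, here is a minimal Python sketch that uses only the installed-cost figures quoted above. The helper names and the idea of a “break-even T&D credit” are illustrative assumptions of ours: the credit simply measures the per-kW capex gap that avoided poles-and-wires value (and resilience) would need to cover, and the sketch ignores differences in output per kW, financing, and lifetime between rooftop and utility-scale systems.

```python
# Installed-cost figures quoted in the text, in $/kW.
CAPEX_PER_KW = {
    "Australia": {"rooftop": 1219.0, "utility": 1061.0},
    "US": {"rooftop": 3520.0, "utility": 1101.0},
}


def rooftop_premium(rooftop: float, utility: float) -> float:
    """Fractional extra upfront cost of rooftop over utility-scale capacity."""
    return rooftop / utility - 1


def breakeven_td_credit(rooftop: float, utility: float) -> float:
    """Hypothetical avoided-T&D value ($ per kW of capacity) that would close
    the capex gap. Ignores output per kW, financing, and lifetime."""
    return rooftop - utility


for country, cost in CAPEX_PER_KW.items():
    premium = rooftop_premium(cost["rooftop"], cost["utility"])
    gap = breakeven_td_credit(cost["rooftop"], cost["utility"])
    print(f"{country}: rooftop premium {premium:.1%}, gap ${gap:,.0f}/kW")
```

Under these assumptions the Australian rooftop premium comes out near 15% ($158/kW) and the US premium near 220% ($2,419/kW), which is consistent with the paragraph attributing most of the US gap to bureaucratic soft costs rather than to the technology itself.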

Viewing DERs as redundant to the bulk grid is thus a mistake, since they can do things bulk power plants can’t. Current market structures don’t recognize this, and as a result compensate DERs haphazardly, if at all. DERs provide three core values to the grid: they act as wholesale power plants (individually or aggregated) by providing energy and capacity (or other ancillary services) to markets, they reduce our need for poles and wires by sitting at or near demand, and they provide resilience to the end user. Markets today are getting better at valuing the first two explicitly (like VDER in NY), but none yet value the third effectively. Most importantly, large bulk power plants can’t provide the second and third values. Since these new, modular technologies provide benefits to the grid that large plants can’t, and their unit economics are by nature largely independent of scale, the grid will become distributed. It’s a techno-economic inevitability.
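The three value streams can be sketched as a simple “value stack” data structure. This is a hypothetical Python illustration, not a tariff model: the class name, the flags, and every dollar figure are placeholders chosen only to show the shape of the argument, namely that today’s markets pay for at most the first two streams and that a bulk plant has nothing to offer in the second and third.

```python
from dataclasses import dataclass


@dataclass
class ValueStack:
    """Hypothetical annual value ($/yr) of one resource, split into the three
    value streams described in the text."""
    wholesale: float   # energy, capacity, and ancillary services sold to markets
    avoided_td: float  # poles-and-wires investment deferred by sitting near load
    resilience: float  # value to the end user of keeping the lights on

    def total(self) -> float:
        return self.wholesale + self.avoided_td + self.resilience

    def compensated(self, pays_wholesale: bool, pays_avoided_td: bool) -> float:
        """What a market pays. Resilience is never counted, reflecting the
        text's point that no market values it effectively yet."""
        paid = 0.0
        if pays_wholesale:
            paid += self.wholesale
        if pays_avoided_td:
            paid += self.avoided_td
        return paid


# Placeholder numbers for illustration only, not measured values.
home_battery = ValueStack(wholesale=200.0, avoided_td=150.0, resilience=400.0)
bulk_plant = ValueStack(wholesale=200.0, avoided_td=0.0, resilience=0.0)

for name, stack in [("home battery", home_battery), ("bulk plant", bulk_plant)]:
    paid = stack.compensated(pays_wholesale=True, pays_avoided_td=False)
    print(f"{name}: total ${stack.total():.0f}, paid ${paid:.0f}, "
          f"unpaid ${stack.total() - paid:.0f}")
```

With these placeholder numbers, a market that pays only for wholesale services leaves $550 of the battery’s $750 unpaid, while the bulk plant is paid in full because its stack has only one layer.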

Market frameworks must adapt to create a clearing price for resilience

Since the techno-economics of DERs will drive a more distributed grid, our market frameworks should adapt to make sure DERs are compensated fairly. The first step will be to give DERs access to markets so they can act like big power plants, and to compensate them for the T&D build-out they help avoid. This uses the existing framework as best we can. From there, we can start considering new frameworks that compensate DERs for the resilience they offer, so that their full value is accounted for. This would require addressing the true costs of outages caused both by supply shortages and by T&D failures, which markets today are not equipped to do. Very few markets in the US currently quantify these deferred T&D costs effectively, so consumers still need better price signals to fully recoup the costs of their investment. Furthermore, customers seeking resilience today must implicitly price the externalities of power outages, with no help from the power market. What’s striking about DERs, then, is that with effective market design, they could actually be economically superior to utility-scale plants.

Even if large power plants and efficient bulk power markets seem cheaper for a while, when tail events occur they reveal enormous, previously hidden costs: the cost of resilience. In the case of Winter Storm Uri, the average price of power in Texas over the period from 2010 to February 2021 jumped from $30.5/MWh to $43.6/MWh. That is, a three-day event raised average prices for an entire decade by roughly 43%. Not only did markets become less efficient (more expensive) when the tail event occurred, but users’ economic losses from the outages, which totaled anywhere from $100 billion to $300 billion across the state, aren’t even reflected in this price jump. Ironically, the only wholesale market that somewhat tries to address this is ERCOT, through the concept of the Value of Lost Load (VOLL): a customer’s willingness to pay to avoid an outage, which should theoretically match the cost of damages from the storm.

When grid planners forced outages during Uri, they eliminated users’ ability to choose to pay more for power, even if their willingness to pay was higher than the wholesale market price cap. ERCOT had set the market price cap at $9,000/MWh, even knowing that many users’ VOLL is as high as $42,000/MWh. When an outage stems from a supply shortage, rolling outages should therefore be based on users’ willingness to pay, because each user bears the cost of losing power anyway: a user with a VOLL of $42,000/MWh is better off paying $20,000/MWh than being shut off while prices are capped at $9,000/MWh. This, however, would require utilities to be able to direct outages to individual meters, and every customer (or their provider) to understand demand response. The former is already technically feasible and is being pursued by grid planners in places like Texas, while the latter is growing rapidly as a variety of innovative business models bring more demand response solutions to market.
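The willingness-to-pay rule for rolling outages can be sketched as a merit-order curtailment: serve meters from highest to lowest VOLL and shed the rest. The Python below is a minimal illustration under stated assumptions, not ERCOT’s actual procedure. It assumes outages can be directed to individual meters (the capability the paragraph says is required), treats each meter as all-or-nothing, and uses invented loads and VOLLs. The “clearing price” it reports is simply the VOLL of the most valuable meter that still gets shed, one convention among several.

```python
from dataclasses import dataclass


@dataclass
class Meter:
    """A hypothetical customer meter that the utility can switch off individually."""
    name: str
    load_mw: float
    voll: float  # value of lost load, $/MWh


def shed_by_voll(meters: list[Meter], supply_mw: float):
    """Serve meters in descending order of VOLL until supply runs out.

    Once one meter no longer fits, it and every lower-VOLL meter are shed,
    so curtailment falls on the customers who value power least.
    Returns (served, curtailed, clearing_price), where clearing_price is the
    VOLL of the highest-value curtailed meter (0.0 if nothing is shed).
    """
    served: list[Meter] = []
    curtailed: list[Meter] = []
    remaining = supply_mw
    for meter in sorted(meters, key=lambda m: m.voll, reverse=True):
        if not curtailed and meter.load_mw <= remaining:
            served.append(meter)
            remaining -= meter.load_mw
        else:
            curtailed.append(meter)
    clearing_price = curtailed[0].voll if curtailed else 0.0
    return served, curtailed, clearing_price


def lost_value(curtailed: list[Meter], hours: float) -> float:
    """Dollar cost of unserved energy: load (MW) x VOLL ($/MWh) x duration (h)."""
    return sum(m.load_mw * m.voll * hours for m in curtailed)


# Illustrative loads and VOLLs only; not data from Uri.
meters = [
    Meter("hospital", 5.0, 42_000.0),
    Meter("grocery", 2.0, 12_000.0),
    Meter("office", 3.0, 4_000.0),
    Meter("warehouse", 4.0, 1_500.0),
]
served, curtailed, price = shed_by_voll(meters, supply_mw=8.0)
print([m.name for m in served], [m.name for m in curtailed], price)
print(lost_value(curtailed, hours=3.0))
```

Here the hospital and grocery stay on, and shedding the office and warehouse for three hours costs $54,000 in lost value; cutting the hospital alone for the same three hours would cost 5 × $42,000 × 3 = $630,000. The all-or-nothing rule is a simplification: in this example it leaves 1 MW of supply unused rather than partially serving the office.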

If everyone could participate in demand response, markets wouldn’t need price caps, which effectively redistribute costs from users with VOLLs below the cap to those with VOLLs above it. Admittedly, if the price cap is sufficiently higher than most individual VOLLs, users still have enough incentive to invest in DERs, but the cap essentially limits the returns on their investment to the spread between their VOLL and the cap, weakening the signal and pushing the true costs of outages outside power markets. While eliminating price caps (again contingent on widespread demand response) may sound radical, remember that users are already paying these costs outside the market, and that electricity would simply start to look like other commodities, where customers actually respond to prices. How many other commodities have price caps? Eliminating caps would create a more explicit clearing price for resilience, and users would be able to measure the tails more accurately and deploy capital to protect against them. Knowing their individual VOLL, they could see how often the market reaches it and invest in onsite DER capacity accordingly. Counterintuitively, this would be fairer to users, not less fair: by removing the opacity around the externalities of outages, it would let them properly price their own insurance and receive payouts from it during high-price events.
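The claim that a cap hides the tail from users can be checked with a toy calculation. This sketch assumes a hypothetical year of prices (mostly $30/MWh, plus four scarcity hours that would clear at $50,000/MWh without a cap), a user VOLL of $42,000/MWh, and the $9,000/MWh cap from the Uri discussion; the function names are ours. It counts how often the reported price reaches the user’s VOLL, and how much the market would pay for 1 kW of onsite capacity during the scarcity hours, valuing each MWh at no more than the user’s VOLL.

```python
from typing import Optional


def visible_prices(prices: list[float], cap: Optional[float] = None) -> list[float]:
    """Prices as the market reports them: clipped at the cap, if there is one."""
    return list(prices) if cap is None else [min(p, cap) for p in prices]


def hours_at_or_above_voll(prices: list[float], voll: float,
                           cap: Optional[float] = None) -> int:
    """Number of hours in which the reported price reaches the user's VOLL."""
    return sum(1 for p in visible_prices(prices, cap) if p >= voll)


def scarcity_payment_per_kw(prices: list[float], voll: float,
                            cap: Optional[float] = None) -> float:
    """Dollars the market pays for 1 kW of onsite supply across the scarcity
    hours (hours whose uncapped price reaches VOLL), valuing each MWh at no
    more than the user's VOLL. Dividing $/MWh by 1000 gives $ per kWh, i.e.
    $ for 1 kW delivered over one hour."""
    shown = visible_prices(prices, cap)
    return sum(min(s, voll) for s, raw in zip(shown, prices) if raw >= voll) / 1000


# Hypothetical year: 8,756 ordinary hours at $30/MWh plus 4 scarcity hours
# that would clear at $50,000/MWh if there were no cap.
prices = [30.0] * 8756 + [50_000.0] * 4
voll = 42_000.0  # the high-end VOLL cited in the text

for cap in (None, 9_000.0):
    print(f"cap={cap}: {hours_at_or_above_voll(prices, voll, cap)} h at VOLL, "
          f"${scarcity_payment_per_kw(prices, voll, cap):.0f}/kW")
```

Without the cap, this user sees 4 hours at or above their VOLL and a $168/kW signal; with the cap, they see 0 hours and $36/kW. The missing $132/kW per year is the kind of cost the paragraph describes being pushed outside the market.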

VOLL, however, does not address the other type of outage: failures in delivering the power itself. In the current paradigm, because poles and wires are an unavoidable design feature, outages from supply shortages are seen as a market failure, but outages from downed wires are seen as just the unfortunate reality of the grid. Because of this, resilience isn’t really seen as an attainable goal: who can you hold accountable for hurricanes knocking wires over time after time? It’s admittedly hard to promise customers it won’t happen and to price the service accordingly. So it’s not enough for grid planners at the wholesale level to take a more rigorous approach to VOLL. The price of power could be determined more efficiently, but that wouldn’t guarantee users delivery of it. Creating clearing prices for resilience would therefore force us to look at the wires side of the equation too.

One way this could work is through resilience-driven Service Level Agreements (SLAs) between users and power providers or utilities, built around metrics such as endpoint uptime. Given that promising resilience requires both procuring sufficient power and delivering it, this may actually lead to a re-emergence of vertically integrated T&D infrastructure and power suppliers. If this too sounds scary, consider that DERs inhibit the formation of natural monopolies in distribution infrastructure, so vertical integration doesn’t necessarily imply regional monopolies, as it does today. In fact, this model already exists. Microgrid developers are essentially vertically integrated providers, because they own both generating resources and delivery infrastructure. Their contracts are frequently structured as SLAs, too. It just happens to be behind the meter, and the delivery infrastructure isn’t very extensive. This future will thus emerge naturally as the number of microgrids and DERs increases. At a certain point, interconnecting disparate systems, particularly in rural areas, may make sense. Rather than a landscape of off-grid users, one could imagine many more, smaller T&D and generation owners, interconnected through the common standards (enforced by PUCs) that we have already developed and that did not exist when vertical integration first emerged in the early 20th century.
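An endpoint-uptime SLA ultimately reduces to simple arithmetic over a meter’s outage log. The Python sketch below is illustrative only: the 99.9% target, the outage durations, and the function names are hypothetical assumptions, with the 192-hour (8-day) outage chosen to fall within the 7 to 10 days cited for Hurricane Isaias.

```python
HOURS_PER_YEAR = 8760


def endpoint_uptime(outage_hours: list[float],
                    period_hours: float = HOURS_PER_YEAR) -> float:
    """Fraction of the period during which this meter had power."""
    return 1 - sum(outage_hours) / period_hours


def allowed_downtime(target: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Hours of outage an uptime target permits over the period."""
    return (1 - target) * period_hours


def meets_sla(outage_hours: list[float], target: float,
              period_hours: float = HOURS_PER_YEAR) -> bool:
    """True if the meter's measured uptime meets the SLA target."""
    return endpoint_uptime(outage_hours, period_hours) >= target


SLA_TARGET = 0.999  # hypothetical "three nines" endpoint-uptime guarantee

grid_only = [192.0]   # one 8-day storm outage (hypothetical)
with_ders = [4.0]     # hypothetical: onsite solar and storage bridge all but 4 hours

print(f"{allowed_downtime(SLA_TARGET):.2f} h allowed per year")
for label, outages in [("grid only", grid_only), ("with DERs", with_ders)]:
    print(label, f"{endpoint_uptime(outages):.4f}", meets_sla(outages, SLA_TARGET))
```

A 99.9% target allows about 8.76 hours of downtime a year, so the grid-only meter (about 97.81% uptime) misses it, while the meter whose DERs leave it dark for only 4 hours (about 99.95%) meets it. Measuring uptime at the meter, rather than at the wholesale market, is what brings the wires side of the equation into view.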

Whatever form it takes (the above discussion is a thought experiment), treating endpoint uptime rather than wholesale market uptime as the key metric would give us an entirely new framework for approaching markets. And with proper market design that incorporates the currently hidden externalities into power market price signals, DERs will lead to a cheaper grid. Most importantly, the debate over the costs of DERs vs. centralized power plants ignores this reality, and the simple proof is that many people buy DERs for resilience, whether or not they “pencil out” financially. Until now, average users didn’t have much need for local resilience or much choice in how to acquire it, so they focused primarily on cost. But now that these options are available, the centralized grid keeps failing, and electrification is making resilience more valuable, more and more users will adopt DERs after each new outage, whether or not they deliver savings or our markets compensate them properly. Since individual actors are adopting these solutions on their own today, we don’t need to wait around for markets to adapt. This reveals a core truth about the power of a bottom-up, distributed grid in contrast to the top-down models we have today: individual actors are implicitly pricing these hidden externalities, and will do so far more effectively than any central planner focused on “efficiency” ever could. It would be a lot better for everyone if markets reflected this reality.

Conclusion: Resilience for the win

Resiliency is the killer application of DERs. One need look no further than how search traffic for questions like “how can I install a battery in my house?” spikes after wildfires in CA, hurricanes in NY, or big freezes in Texas once again knock out the power grid. This is now a universal problem, and over time resiliency benefits will lead more and more users to adopt these superior technologies, especially as electrification increases the value of resilience. As more individual users adopt them, the collective will benefit.

What this means is that we shouldn’t care if the overall costs of a distributed system are higher than those of a centralized one (which likely isn’t even true). Asking about average levelized power costs is simply the wrong question, as it frames the debate in the old paradigm’s terms. The right questions are: How do we organize our energy systems to fulfill individual needs? How do we provide value? How do we make sure lower-income communities get microgrids too? How do we lower the costs of T&D build-out, and not just energy supply costs? A new paradigm for market design will emerge from answering those questions effectively. And since resilience and efficiency are at odds with each other, I’m arguing that in the future, resilience will matter more. This is not to say that the grid will become hyper-fragmented. Bulk power plants, whether nuclear, hydro, geothermal, solar, or wind, will all play their role, but the underlying architecture of institutional power on the grid must change.

While in the past the God of Efficiency confidently decreed that “reliability” mattered more than avoiding individual or distribution grid (local) outages, or “resiliency,” the future grid will invert that relationship. Micro resilience over macro reliability. Local redundancy over bulk efficiency. Safety (which is what customers value anyway) over cost. And as the grid continues to fail, users will show how obvious this choice really is.